An article at (http://www.math.columbia.edu/~woit/wordpress/?p=5241 ) wrote: “The latest Scientific American has a cover story about particle physics…. It’s called “The Inner Life of Quarks” and discusses models in which quarks and other elementary particles of the standard model are composites of more elementary objects called “preons”. The fact that the papers on the subject it refers to are from 1979 should make one suspicious: an idea that hasn’t had major developments in 33 years is a dead idea. Besides the overwhelming experimental evidence against preons (with the LHC bringing in many new much stronger negative results), the idea has huge inherent problems. The main issue is that one is trying to put together composites with masses as small as MeVs (or lower, if you try to do this with neutrinos) while the data says that things are point-like up to TeV scales, with just the forces you know about up to such scales.”

The following is a summary of the Scientific American article.

1. In 1869 Dmitri Mendeleev created the periodic table of chemical elements by noticing that elements' properties fit into a repeating pattern, which physicists later explained as a consequence of atomic structure. A similar story may be playing out in particle physics again today.

2. The 12 known elementary particles have their own repeating patterns, suggesting they are not truly fundamental but actually tiny balls containing smaller particles, which physicists tentatively call preons.

3. Other evidence argues against this possibility. The Large Hadron Collider at CERN, along with several lesser-known experiments, may finally settle the question.

The whole article is available at (http://www.nature.com/scientificamerican/journal/v307/n5/full/scientificamerican1112-36.html ).

There are at least four major differences between the Preon/Rishon models and the Prequark model.

More details are available at http://www.prequark.org/ (no longer online).

One, the Preon model (proposed by Abdus Salam) was expanded into the Rishon model (mainly by Haim Harari). The Rishon model is very similar to my Prequark model. It has two sub-quarks, T and V: T (Tohu, which means "unformed" in the Hebrew Genesis) and V (Vohu, which means "void" in the Hebrew Genesis). But Harari did not know what T is (it is just "unformed"). On the other hand, the prequark A (Angultron) is an innate angle, a base for calculating the Weinberg angle and Alpha.

Two, the choice of (T, V) as the bottom level was ad hoc, a result of reverse-engineering. By contrast, G-theory has a very strong theoretical reason for where the BOTTOM is.

In G-theory, the universe is ALL about computation, computable or non-computable. For the computable part, there is a TWO-code theorem. For the non-computable part, there are the 4-color and 7-color theorems.

That is, the BOTTOM must consist of two codes. Any level below the two codes becomes a TAUTOLOGY, just repeating itself.

Anything more than two codes (such as 6 quarks + 6 leptons) cannot be the BOTTOM.

Three, rishons (T or V) carry hypercolor to reproduce the quark color, but this setup renders the model non-renormalizable, and it quickly turns into a big mess. So, it was abandoned on day one. On the other hand, prequarks (V or A) carry no color, and the quark color arises from the “prequark SEATs”. In short, the Rishon model cannot work out a {neutron decay process} different from the SM process.

This is one of the key differences between the Prequark model and both the Rishon model and the SM.

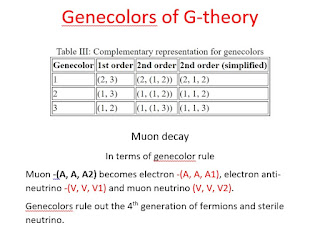

Four, the Preon/Rishon model does not have Gene-colors.
